Amazon now commonly asks interviewees to code in an online document. Now that you know what questions to expect, let's focus on exactly how to prepare.

Below is our four-step prep plan for Amazon data scientist candidates. If you're preparing for more companies than just Amazon, check our general data science interview preparation guide. Before investing tens of hours preparing for an interview at Amazon, take some time to make sure it's actually the right company for you. Many candidates fail to do this.

, which, although it's designed around software development, should give you an idea of what they're looking for.

Keep in mind that in the onsite rounds you'll likely have to code on a whiteboard without being able to execute it, so practice working through problems on paper. For machine learning and statistics questions, there are online courses designed around statistics, probability and other useful topics, some of which are free. Kaggle offers free courses on introductory and intermediate machine learning, as well as data cleaning, data visualization, SQL, and others.

Preparing For Technical Data Science Interviews

Make sure you have at least one story or example for each of the principles, from a variety of positions and projects. A great way to practice all of these different types of questions is to interview yourself out loud. This may sound strange, but it will significantly improve the way you communicate your answers during an interview.

Trust us, it works. Practicing by yourself will only take you so far. One of the main challenges of data scientist interviews at Amazon is communicating your answers in a way that's easy to understand. Because of this, we strongly recommend practicing with a peer interviewing you. If possible, a great place to start is to practice with friends.

Friends, however, are unlikely to have insider knowledge of interviews at your target company. For these reasons, many candidates skip peer mock interviews and go straight to mock interviews with an expert.

Designing Scalable Systems In Data Science Interviews

That's an ROI of 100x!

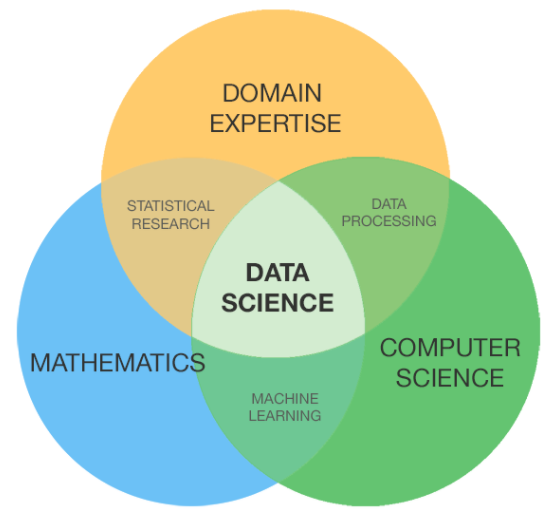

Traditionally, data science would focus on mathematics, computer science and domain expertise. While I will briefly cover some computer science fundamentals, the bulk of this blog will cover the mathematical basics one might need to brush up on (or even take a whole course on).

While I understand most of you reading this are more math heavy by nature, realize that the bulk of data science (dare I say 80%+) is collecting, cleaning and processing data into a useful form. Python and R are the most popular languages in the data science space. I have also come across C/C++, Java and Scala.

Data Engineer End To End Project

It is common to see the majority of data scientists falling into one of two camps: Mathematicians and Database Architects. If you are the second one, this blog won't help you much (YOU ARE ALREADY AWESOME!).

Collecting data could mean gathering sensor data, parsing websites or carrying out surveys. After the data is collected, it needs to be transformed into a usable form (e.g. a key-value store in JSON Lines files). Once the data is collected and put in a usable format, it is essential to perform some data quality checks.
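As a rough illustration of the two steps above, here is a minimal sketch that writes records as JSON Lines and then runs a few basic quality checks. The sensor records, field names and the choice of checks (missing values, exact duplicates) are assumptions made up for this example, not a fixed recipe.

```python
import io
import json

# Hypothetical sensor readings; the field names are assumptions for illustration.
records = [
    {"sensor_id": "a1", "temperature": 21.5},
    {"sensor_id": "a2", "temperature": None},   # missing value
    {"sensor_id": "a1", "temperature": 21.5},   # exact duplicate of the first record
]

def to_json_lines(rows):
    """Serialize each record as one JSON object per line (JSON Lines)."""
    return "\n".join(json.dumps(row, sort_keys=True) for row in rows)

def quality_report(jsonl_text):
    """Count rows, rows with missing values, and exact duplicate rows."""
    seen = set()
    report = {"rows": 0, "rows_with_missing": 0, "duplicates": 0}
    for line in io.StringIO(jsonl_text):
        line = line.strip()
        if not line:
            continue
        row = json.loads(line)
        report["rows"] += 1
        if any(value is None for value in row.values()):
            report["rows_with_missing"] += 1
        if line in seen:
            report["duplicates"] += 1
        seen.add(line)
    return report

report = quality_report(to_json_lines(records))
print(report)
```

Real pipelines usually add range checks and type checks per field as well; the point here is only that the checks run on the stored format, not on the in-memory objects.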

Statistics For Data Science

However, in cases of fraud, it is very common to have heavy class imbalance (e.g. only 2% of the dataset is actual fraud). Such information is important for making the right choices in feature engineering, modelling and model evaluation. For more information, check my blog on Fraud Detection Under Extreme Class Imbalance.
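A quick way to spot class imbalance is to look at the share of each label before doing anything else. The sketch below uses made-up labels that mirror the 2% example; the label encoding (1 for fraud, 0 for legitimate) is an assumption.

```python
from collections import Counter

# Hypothetical labels: 1 = fraud, 0 = legitimate (2% fraud, as in the example above).
labels = [1] * 2 + [0] * 98

def class_shares(y):
    """Return the fraction of the dataset that belongs to each class."""
    counts = Counter(y)
    total = len(y)
    return {label: count / total for label, count in counts.items()}

shares = class_shares(labels)
print(shares)

# A model that always predicts the majority class already reaches this accuracy,
# which is why accuracy alone is a poor metric under heavy imbalance.
majority_baseline_accuracy = max(shares.values())
print(majority_baseline_accuracy)
```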

In bivariate analysis, each feature is compared to other features in the dataset. Scatter matrices allow us to find hidden patterns such as:

- features that should be engineered together
- features that may need to be eliminated to avoid multicollinearity

Multicollinearity is actually an issue for multiple models like linear regression and hence needs to be taken care of accordingly.
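Alongside a scatter matrix, pairwise correlations give a numeric hint about multicollinearity. The following is a minimal sketch that flags feature pairs with a very high Pearson correlation; the feature names, the data and the 0.95 threshold are all assumptions chosen for illustration.

```python
import math

def pearson(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)

# Hypothetical features; "size_sqm" is essentially "size_sqft" in different units.
features = {
    "size_sqft": [500.0, 750.0, 1000.0, 1250.0, 1500.0],
    "size_sqm": [46.5, 69.7, 92.9, 116.1, 139.4],
    "age_years": [30.0, 5.0, 18.0, 42.0, 11.0],
}

def collinear_pairs(columns, threshold=0.95):
    """Return feature pairs whose absolute correlation reaches the threshold."""
    names = list(columns)
    pairs = []
    for i, first in enumerate(names):
        for second in names[i + 1:]:
            if abs(pearson(columns[first], columns[second])) >= threshold:
                pairs.append((first, second))
    return pairs

flagged = collinear_pairs(features)
print(flagged)
```

Pairwise correlation only catches collinearity between two features at a time; a feature that is a combination of several others would need a different check.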

Imagine using internet usage data. You will have YouTube users going as high as gigabytes while Facebook Messenger users use a couple of megabytes.
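When features live on such different scales, rescaling is the usual remedy. Here is a small sketch of two common options, min-max scaling and standardization; the usage numbers are invented and which method fits best depends on the model.

```python
import statistics

# Hypothetical monthly data usage in megabytes: light messaging users next to heavy video users.
usage_mb = [2.0, 5.0, 3.0, 4000.0, 12000.0]

def min_max_scale(values):
    """Rescale values linearly to the [0, 1] range."""
    low, high = min(values), max(values)
    return [(v - low) / (high - low) for v in values]

def standardize(values):
    """Rescale values to zero mean and unit (population) standard deviation."""
    mean = statistics.fmean(values)
    std = statistics.pstdev(values)
    return [(v - mean) / std for v in values]

scaled = min_max_scale(usage_mb)
z_scores = standardize(usage_mb)
print(scaled)
print(z_scores)
```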

Another issue is the use of categorical values. While categorical values are common in the data science world, realize that computers can only understand numbers. For categorical values to make mathematical sense, they need to be transformed into something numeric. Typically for categorical values, it is common to perform a One Hot Encoding.
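To make the idea concrete, here is a minimal hand-rolled sketch of One Hot Encoding. The helper name `one_hot_encode` and the color column are made up for this example; in practice a library routine would normally be used instead.

```python
def one_hot_encode(values):
    """Map each category to a 0/1 vector with a single 1 at its category's index."""
    categories = sorted(set(values))  # fixed, reproducible column order
    index = {category: i for i, category in enumerate(categories)}
    encoded = []
    for value in values:
        row = [0] * len(categories)
        row[index[value]] = 1
        encoded.append(row)
    return categories, encoded

# Hypothetical categorical column.
colors = ["red", "green", "blue", "green"]
columns, matrix = one_hot_encode(colors)
print(columns)
print(matrix)
```

Each row contains exactly one 1, so no artificial ordering between categories is introduced, which is the main reason to prefer this over mapping categories to 1, 2, 3.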

Python Challenges In Data Science Interviews

At times, having too many sparse dimensions will hamper the performance of the model. For such situations (as commonly done in image recognition), dimensionality reduction algorithms are used. An algorithm commonly used for dimensionality reduction is Principal Component Analysis, or PCA. Learn the mechanics of PCA, as it is also one of those topics that commonly comes up in interviews. For more information, check out Michael Galarnyk's blog on PCA using Python.
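To show the mechanics of PCA rather than a library call, the sketch below centers the data, builds the covariance matrix, and approximates its top eigenvector with power iteration. Power iteration is a simplification chosen here to keep the example dependency-free (a library would typically use an SVD), and the data is invented.

```python
import math

def covariance_matrix(rows):
    """Sample covariance matrix of mean-centered data (rows = observations)."""
    n, d = len(rows), len(rows[0])
    means = [sum(row[j] for row in rows) / n for j in range(d)]
    centered = [[row[j] - means[j] for j in range(d)] for row in rows]
    cov = [[sum(r[i] * r[j] for r in centered) / (n - 1) for j in range(d)]
           for i in range(d)]
    return cov, centered

def first_principal_component(cov, iterations=200):
    """Approximate the top eigenvector of the covariance matrix by power iteration."""
    vector = [1.0] * len(cov)
    for _ in range(iterations):
        product = [sum(c * v for c, v in zip(row, vector)) for row in cov]
        norm = math.sqrt(sum(p * p for p in product))
        vector = [p / norm for p in product]
    return vector

# Hypothetical 2-D data lying close to the line y = x.
data = [[1.0, 1.1], [2.0, 1.9], [3.0, 3.2], [4.0, 3.9], [5.0, 5.1]]
cov, centered = covariance_matrix(data)
component = first_principal_component(cov)

# Project every observation onto the component: 2 dimensions reduced to 1.
projected = [sum(x * w for x, w in zip(row, component)) for row in centered]
print(component)
print(projected)
```

Because the points lie near the line y = x, the component comes out close to the diagonal direction, and the one-dimensional projection keeps almost all of the variance.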

The common categories of feature selection methods and their subcategories are explained in this section. Filter methods are generally used as a preprocessing step.

Common methods under this category are Pearson's Correlation, Linear Discriminant Analysis, ANOVA and Chi-Square. In wrapper methods, we try to use a subset of features and train a model using them. Based on the inferences that we draw from the previous model, we decide to add or remove features from the subset.
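The add-or-remove loop of a wrapper method can be sketched as greedy forward selection. In this example the model is a 1-nearest-neighbour classifier scored by leave-one-out accuracy; that model, the scoring choice and the toy data are assumptions made for illustration, and any model with a score could be swapped in.

```python
def loo_accuracy(rows, labels, feature_idx):
    """Leave-one-out accuracy of a 1-nearest-neighbour classifier on the chosen features."""
    correct = 0
    for i, row in enumerate(rows):
        best_dist, best_label = None, None
        for j, other in enumerate(rows):
            if i == j:
                continue
            dist = sum((row[k] - other[k]) ** 2 for k in feature_idx)
            if best_dist is None or dist < best_dist:
                best_dist, best_label = dist, labels[j]
        correct += best_label == labels[i]
    return correct / len(rows)

def forward_selection(rows, labels, n_features):
    """Greedily add the feature that improves the score most; stop when nothing helps."""
    selected, best_score = [], 0.0
    remaining = list(range(n_features))
    while remaining:
        scored = [(loo_accuracy(rows, labels, selected + [f]), f) for f in remaining]
        score, feature = max(scored, key=lambda pair: pair[0])
        if score <= best_score:
            break
        selected.append(feature)
        remaining.remove(feature)
        best_score = score
    return selected, best_score

# Hypothetical data: feature 0 separates the classes, feature 1 is noise.
rows = [[0.1, 5.0], [0.2, 1.0], [0.3, 9.0], [0.9, 5.2], [1.0, 1.1], [1.1, 9.1]]
labels = [0, 0, 0, 1, 1, 1]

selected, score = forward_selection(rows, labels, n_features=2)
print(selected, score)
```

Every candidate subset requires retraining and rescoring the model, so the cost of this loop grows quickly with the number of features.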

Behavioral Questions In Data Science Interviews

These methods are usually computationally very expensive. Common methods under this category are Forward Selection, Backward Elimination and Recursive Feature Elimination. Embedded methods combine the qualities of filter and wrapper methods. They are implemented by algorithms that have their own built-in feature selection methods. LASSO and RIDGE are common ones. The regularized objectives are given below for reference:

Lasso: $$\min_{\beta} \sum_{i=1}^{n} \left(y_i - x_i^{\top}\beta\right)^2 + \lambda \sum_{j=1}^{p} |\beta_j|$$

Ridge: $$\min_{\beta} \sum_{i=1}^{n} \left(y_i - x_i^{\top}\beta\right)^2 + \lambda \sum_{j=1}^{p} \beta_j^2$$

That being said, it is important to understand the mechanics behind LASSO and RIDGE for interviews.
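One way to build intuition for the mechanics of LASSO and RIDGE is the special case of orthonormal features, where each coefficient can be shrunk independently of the others. The sketch below assumes that special case and the scaling conventions written in the docstrings; the coefficient values and the penalty strength are made up.

```python
def ridge_shrink(beta_ols, lam):
    """Ridge solution for one coefficient under an orthonormal design:
    minimizes 0.5 * (beta - beta_ols)**2 + 0.5 * lam * beta**2."""
    return beta_ols / (1.0 + lam)

def lasso_shrink(beta_ols, lam):
    """Lasso solution (soft-thresholding) under an orthonormal design:
    minimizes 0.5 * (beta - beta_ols)**2 + lam * abs(beta)."""
    if abs(beta_ols) <= lam:
        return 0.0
    return beta_ols - lam if beta_ols > 0 else beta_ols + lam

# Hypothetical unregularized (least squares) coefficients and penalty strength.
ols_coefficients = [3.0, -0.4, 0.2]
lam = 0.5

ridge = [ridge_shrink(b, lam) for b in ols_coefficients]
lasso = [lasso_shrink(b, lam) for b in ols_coefficients]
print(ridge)
print(lasso)
```

Ridge scales every coefficient down but leaves none at exactly zero, while Lasso sets the small ones to exactly zero. That is why Lasso acts as a feature selector and Ridge does not.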

Supervised Learning is when the labels are available. Unsupervised Learning is when the labels are unavailable. Get it? SUPERVISE the labels! Pun intended. That being said, do not mix up the two! This mistake is enough for the interviewer to cancel the interview. Another noob mistake people make is not normalizing the features before running the model.

Linear and Logistic Regression are the most basic and commonly used Machine Learning algorithms out there. One common interview mistake people make is starting their analysis with a more complex model like a Neural Network before trying a simple one. Benchmarks are vital.
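As a sketch of what a benchmark can look like, the example below fits a one-feature linear regression in closed form and compares its error with a naive predict-the-mean model. The data is invented, and the error is measured on the training data only to keep the example short; a held-out set would be the better choice in practice.

```python
def fit_simple_linear_regression(x, y):
    """Ordinary least squares for one feature: returns (slope, intercept)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    slope = (sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
             / sum((a - mean_x) ** 2 for a in x))
    return slope, mean_y - slope * mean_x

def mean_squared_error(y_true, y_pred):
    """Average squared difference between targets and predictions."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)

# Hypothetical data, roughly y = 2x + 1 with a little noise.
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [3.1, 4.9, 7.2, 8.8, 11.0]

slope, intercept = fit_simple_linear_regression(x, y)
baseline_mse = mean_squared_error(y, [slope * v + intercept for v in x])
naive_mse = mean_squared_error(y, [sum(y) / len(y)] * len(y))
print(slope, intercept, baseline_mse, naive_mse)
```

Any more complex model, a Neural Network included, should beat `baseline_mse` by a meaningful margin to justify its extra cost.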
